Hyperlambda is best understood as a tree-based, declarative, event-driven DSL designed to express agent tools, workflows, API logic, and automation as data structures that are directly executable.

Unlike most “agent frameworks” that treat tool definitions as metadata wrapped around imperative code (Python/JS), Hyperlambda treats the executable representation itself as structured data (an execution tree). This makes it unusually compatible with LLM-driven tool generation, validation, transformation, and composition.

The core abstraction

Hyperlambda is described as a “relational file format allowing you to create execution trees” rather than a classic programming language with a syntax like Python or C#.

Why that distinction matters for AI agents:

- The “program” is already AST-shaped (Abstract Syntax Tree).

- “Parsing” Hyperlambda doesn’t generate an AST as an intermediate representation—Hyperlambda is the AST.

- Every node is a (name, value, children) triple, recursively.
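The triple structure is small enough to model in a few lines. The Python sketch below is illustrative only: it is not Magic's own node class, and the node names tool_a, tool_b, and x are hypothetical placeholders. It shows that "walking the program" is ordinary tree traversal.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any, Iterator


@dataclass
class Node:
    """A (name, value, children) triple whose children are Nodes too."""

    name: str
    value: Any = None
    children: list[Node] = field(default_factory=list)

    def walk(self, depth: int = 0) -> Iterator[tuple[int, Node]]:
        """Yield (depth, node) pairs in pre-order (document order)."""
        yield depth, self
        for child in self.children:
            yield from child.walk(depth + 1)


# Hypothetical tree: [tool_b] with a nested [tool_a] call taking [x].
tree = Node("tool_b", children=[
    Node("tool_a", children=[Node("x", 123)]),
])

for depth, node in tree.walk():
    print("   " * depth + node.name, node.value)
```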

So the execution structure becomes:

- easy to inspect

- easy to diff/merge

- easy to validate statically

- easy to generate incrementally

- easy to persist in a database or on the file system as an “executable”

- easy to apply transformation passes to (rewrite rules)
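As one illustration of "easy to diff", here is a deliberately naive structural diff over (name, value, children) tuples, sketched in Python. It compares children by position and is not a Magic feature; a production tool would align children more carefully (for example, by name). The point is that changes are reported as paths into the tree rather than as text hunks.

```python
from typing import Any

Triple = tuple[str, Any, list]  # (name, value, children)


def diff(old: list[Triple], new: list[Triple], path: str = "") -> list[str]:
    """Naive positional diff of two node lists; returns readable change lines."""
    changes: list[str] = []
    for i in range(max(len(old), len(new))):
        if i >= len(new):
            changes.append(f"- {path}/{old[i][0]}")  # node removed
        elif i >= len(old):
            changes.append(f"+ {path}/{new[i][0]}")  # node added
        else:
            old_name, old_value, old_children = old[i]
            new_name, new_value, new_children = new[i]
            where = f"{path}/{new_name}"
            if old_name != new_name:
                changes.append(f"~ {path}/{old_name} renamed to {new_name}")
            if old_value != new_value:
                changes.append(f"~ {where}: {old_value!r} -> {new_value!r}")
            changes.extend(diff(old_children, new_children, where))
    return changes


before = [("tool_a", None, [("x", 123, [])])]
after = [("tool_a", None, [("x", 456, []), ("y", 1, [])])]
print(diff(before, after))
```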

This is the same property (homoiconicity) that historically made Lisp effective in metaprogramming contexts, except that Hyperlambda is built around a hierarchical node tree model rather than S-expressions.

Grammar

Hyperlambda’s fundamental token rules are as follows:

- node names and node values are separated by a colon (:)

- values can be raw integers, floating point values, decimal values, strings, quoted strings, multiline strings with @"...", etc.

This is important in AI generation because:

- The syntax surface area is tiny (few structural rules).

- There are far fewer ways to produce “almost valid” code (a common LLM failure mode in Python/JS).

- The model can generate structurally correct programs without managing complex punctuation or statement boundaries.

Hyperlambda is essentially a whitespace-indented tree serialization format whose output is directly meaningful to runtime execution.
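To make the grammar concrete, here is a minimal Python parser for a simplified subset of the format. It assumes three-space indentation per nesting level and only handles `name` or `name:value` lines; values are kept as raw strings, and type declarations and multiline @"..." strings are out of scope. It is a sketch of the idea, not Magic's [hyper2lambda] implementation.

```python
from __future__ import annotations

from typing import Any

INDENT = 3  # assumption: three spaces per nesting level


def parse(text: str) -> list[dict[str, Any]]:
    """Parse a simplified Hyperlambda-like subset into nested dicts.

    Each non-blank line is `name` or `name:value`; children sit INDENT
    spaces deeper than their parent. Values stay raw strings (surrounding
    double quotes are stripped). Type declarations and multiline @"..."
    strings are deliberately not handled.
    """
    root: dict[str, Any] = {"name": "", "value": None, "children": []}
    stack = [root]  # stack[d + 1] is the latest node seen at depth d
    for lineno, raw in enumerate(text.splitlines(), start=1):
        if not raw.strip():
            continue  # ignore blank lines
        spaces = len(raw) - len(raw.lstrip(" "))
        if spaces % INDENT:
            raise SyntaxError(f"line {lineno}: indent is not a multiple of {INDENT}")
        depth = spaces // INDENT
        if depth >= len(stack):
            raise SyntaxError(f"line {lineno}: child has no parent")
        name, sep, value = raw.strip().partition(":")
        if len(value) >= 2 and value[0] == value[-1] == '"':
            value = value[1:-1]  # "quoted string" -> quoted string
        node = {"name": name, "value": value if sep else None, "children": []}
        del stack[depth + 1:]  # pop back to this line's parent
        stack[-1]["children"].append(node)
        stack.append(node)
    return root["children"]


source = """\
tool_b
   tool_a
      x:123
   label:"hello world"
"""
print(parse(source))
```

Because indentation alone determines nesting, there are no brackets or statement terminators for a generator to unbalance; the only structural error left is a bad indent, which the parser reports with a line number.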

Execution semantics

Magic Cloud is built around a plugin system that exposes “slots” and “nodes”; its documentation even notes that, by convention, the two terms are “almost the same”.

From an agent architecture perspective, this is extremely close to the canonical “tool calling” model:

- A tool has a name

- A tool receives arguments (structured)

- A tool returns structured output

- A tool invocation can be nested/composed

In Hyperlambda:

- a node name maps to a callable unit (a “slot” / function-like primitive)

- child nodes map to arguments / nested calls / structured configuration

- returned values can be re-bound into the tree context (depending on node semantics)
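The mapping above can be sketched as a tiny evaluator in Python. The registry, the `slot` decorator, and the rule that a nested slot passes its result under its own name are assumptions made for illustration, not Magic's actual dispatch mechanism. The plan encodes the same intent as `result = tool_a(x=123)` followed by `tool_b(result)`, but as a single tree.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Node:
    name: str
    value: Any = None
    children: list[Node] = field(default_factory=list)


# Hypothetical registry: slot name -> Python callable taking keyword arguments.
SLOTS: dict[str, Callable[..., Any]] = {}


def slot(name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Register the decorated function under the given slot name."""
    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        SLOTS[name] = fn
        return fn
    return register


@slot("tool_a")
def _tool_a(x: str) -> int:
    return int(x) * 2


@slot("tool_b")
def _tool_b(tool_a: int) -> str:
    # A nested slot passes its result under its own name (an assumption).
    return f"tool_b got {tool_a}"


def evaluate(node: Node) -> Any:
    """Invoke a node as a slot, using its children as arguments.

    Children that are registered slots are evaluated first (depth-first) and
    their results are written back into the tree as the child's value.
    """
    if node.name not in SLOTS:
        raise KeyError(f"unknown slot [{node.name}]")
    args: dict[str, Any] = {}
    for child in node.children:
        if child.name in SLOTS:
            child.value = evaluate(child)  # re-bind the result into the tree
        args[child.name] = child.value
    node.value = SLOTS[node.name](**args)
    return node.value


plan = Node("tool_b", children=[Node("tool_a", children=[Node("x", "123")])])
print(evaluate(plan))          # tool_b got 246
print(plan.children[0].value)  # 246
```

Note that after evaluation the intermediate result lives in the tree itself, so it can be inspected or serialized alongside the plan that produced it.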

So instead of writing:

result = tool_a(x=123)

tool_b(result)

Hyperlambda represents the same logic as a nested plan of execution.

This makes it natively compositional, which addresses one of the key pain points of imperative agent programming, where you end up orchestrating execution manually.

Hyperlambda is self-hosting

Magic includes explicit bidirectional transforms:

- [hyper2lambda] converts Hyperlambda text → lambda hierarchy (execution tree)

- [lambda2hyper] converts lambda hierarchy → Hyperlambda text
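The tree → text direction can be approximated with a short serializer. This Python sketch is not [lambda2hyper]; it assumes three-space indentation and uses JSON-style quoting as a stand-in for Hyperlambda's real quoting rules. Because the output depends only on the tree, the same tree always yields the same text, which is what makes canonical output useful for diffs and reviews.

```python
import json
from typing import Any

Triple = tuple[str, Any, list]  # (name, value, children)
INDENT = "   "  # assumption: three spaces per nesting level


def to_text(nodes: list[Triple], depth: int = 0) -> str:
    """Serialize (name, value, children) triples as indented name:value lines."""
    lines: list[str] = []
    for name, value, children in nodes:
        line = INDENT * depth + name
        if value is not None:
            text = str(value)
            # Assumption: quote ambiguous values, with JSON escaping as a stand-in.
            if ":" in text or '"' in text or "\n" in text or text != text.strip():
                text = json.dumps(text)
            line += ":" + text
        lines.append(line)
        if children:
            lines.append(to_text(children, depth + 1))
    return "\n".join(lines)


tree = [("tool_b", None, [
    ("tool_a", None, [("x", 123, [])]),
    ("url", "https://example.com", []),
])]
print(to_text(tree))
```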

Technically, this is a big deal:

Programmatic rewriting

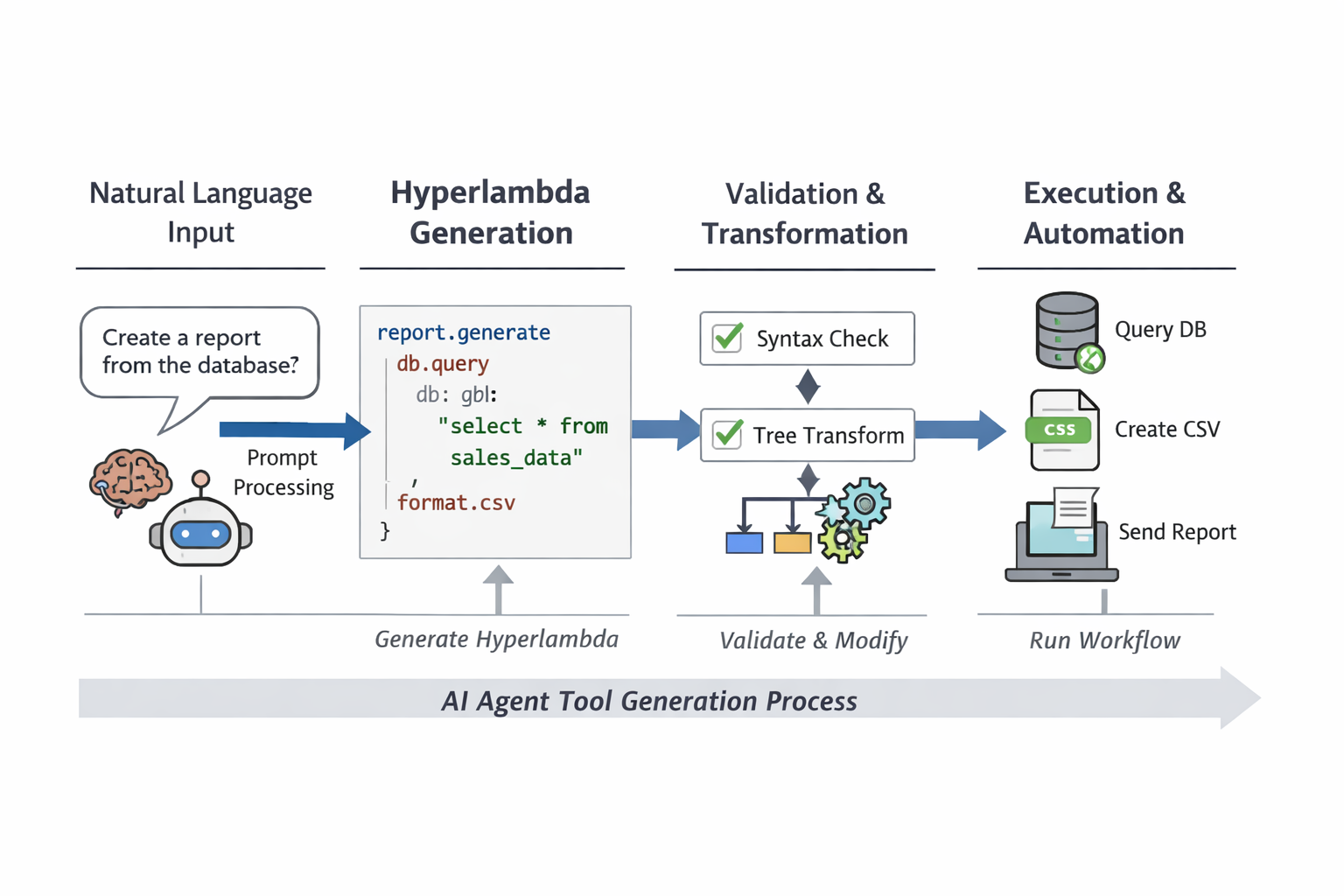

An AI agent can:

- Generate Hyperlambda text

- Parse it to a tree (hyper2lambda)

- Normalize/validate/patch it as data

- Generate canonical output (lambda2hyper)

- Execute it

This gives you a “compiler pipeline” style workflow without needing a full compiler toolchain.

Enforce structural invariants

Instead of regex-based patching or brittle string transforms, you can apply rules like:

- “this node must exist”

- “this argument must be present”

- “this node name is disallowed”

- “these children must be ordered”

- “this subtree must match a schema”

This mirrors how reliable tool generation is typically built in production agent systems: generate → parse → validate → repair → run. Hyperlambda makes that workflow straightforward because the program is already shaped like data.
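The generate → validate → repair loop can be sketched in Python over (name, value, children) tuples. SCHEMA, DISALLOWED, the slot names, and `fake_llm` are all hypothetical: `fake_llm` stands in for a model call, and parsing is skipped by having it return a tree directly. The validator implements three of the rules listed above (disallowed names, unknown nodes, required arguments) and returns path-addressed messages that can be fed back to the generator.

```python
from typing import Any, Callable

Triple = tuple[str, Any, list]  # (name, value, children)

# Hypothetical registry: slot name -> the argument names it requires.
SCHEMA: dict[str, set[str]] = {"tool_a": {"x"}, "tool_b": set()}
DISALLOWED = {"shell.run"}  # hypothetical slot the agent may never emit


def validate(nodes: list[Triple], path: str = "") -> list[str]:
    """Check a tree against SCHEMA; an empty list means it is valid."""
    errors: list[str] = []
    for name, _value, children in nodes:
        where = f"{path}/{name}"
        if name in DISALLOWED:
            errors.append(f"{where}: disallowed node")
            continue
        if name not in SCHEMA:
            errors.append(f"{where}: unknown node")
            continue
        present: set[str] = set()
        calls: list[Triple] = []
        for child in children:
            if child[0] in SCHEMA or child[0] in DISALLOWED:
                calls.append(child)  # nested invocation, checked recursively
            elif child[0] in SCHEMA[name]:
                present.add(child[0])  # declared argument
            else:
                errors.append(f"{where}/{child[0]}: unknown node")
        for arg in sorted(SCHEMA[name] - present):
            errors.append(f"{where}: missing argument [{arg}]")
        errors.extend(validate(calls, where))
    return errors


def generate_validated(generate: Callable[[list[str]], list[Triple]],
                       max_attempts: int = 3) -> list[Triple]:
    """Generate, validate, and feed errors back for repair until valid."""
    errors: list[str] = []
    for _ in range(max_attempts):
        tree = generate(errors)
        errors = validate(tree)
        if not errors:
            return tree  # safe to hand to the runtime
    raise ValueError(f"still invalid after {max_attempts} attempts: {errors}")


def fake_llm(feedback: list[str]) -> list[Triple]:
    # Hypothetical model stand-in: forgets [x] until it sees validator feedback.
    if not feedback:
        return [("tool_b", None, [("tool_a", None, [])])]
    return [("tool_b", None, [("tool_a", None, [("x", "123", [])])])]


print(validate(fake_llm([])))  # ['/tool_b/tool_a: missing argument [x]']
print(generate_validated(fake_llm))
```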

Workflows are code

Magic Cloud implements workflows using Hyperlambda. This removes the need for a visual workflow GUI, because the source is the primary representation. In technical terms, this implies:

- A workflow is just a stored executable tree

- The “workflow engine” can interpret/execute Hyperlambda nodes

- The same representation works for:

   - HTTP endpoints

   - scheduled jobs / tasks

   - triggered workflows (event-driven)

Magic’s task manager also supports background jobs that are persisted in a database as Hyperlambda and can be either triggered or scheduled. This yields a clean execution model for AI agents:

- Agents produce workflows as data

- The system stores them as executable artifacts

- The runtime schedules/triggers them without re-interpreting an external orchestration format (like BPMN/XML)

So Hyperlambda becomes the single intermediate representation for “agent intent → executable automation”.
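A minimal sketch of "workflows as stored executable trees", using Python's built-in sqlite3 module. The `tasks` table, its columns, the task IDs, and the JSON encoding (standing in for Hyperlambda text) are assumptions for illustration, not Magic's actual task schema. The same stored artifact serves both a scheduled task and a trigger-only one.

```python
import json
import sqlite3
from typing import Any, Optional


def save_task(db: sqlite3.Connection, task_id: str, tree: list[Any],
              due: Optional[str] = None) -> None:
    """Store a workflow tree; due is an ISO-8601 timestamp or None (trigger-only)."""
    db.execute(
        "INSERT OR REPLACE INTO tasks (id, body, due) VALUES (?, ?, ?)",
        (task_id, json.dumps(tree), due),
    )
    db.commit()


def load_task(db: sqlite3.Connection, task_id: str) -> list[Any]:
    """Load a stored tree back into Python lists, ready to execute."""
    row = db.execute("SELECT body FROM tasks WHERE id = ?", (task_id,)).fetchone()
    if row is None:
        raise KeyError(task_id)
    return json.loads(row[0])


def due_tasks(db: sqlite3.Connection, now: str) -> list[str]:
    """IDs of scheduled tasks due at or before `now` (same-format ISO strings sort chronologically)."""
    rows = db.execute(
        "SELECT id FROM tasks WHERE due IS NOT NULL AND due <= ? ORDER BY due",
        (now,),
    )
    return [task_id for (task_id,) in rows]


db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE tasks (id TEXT PRIMARY KEY, body TEXT NOT NULL, due TEXT)")

# Lists rather than tuples, so the tree survives the JSON round trip unchanged.
workflow = [["tool_b", None, [["tool_a", None, [["x", "123", []]]]]]]
save_task(db, "nightly-report", workflow, due="2024-01-01T02:00:00")
save_task(db, "on-signup", workflow)  # trigger-only, no schedule

print(load_task(db, "nightly-report") == workflow)  # True
print(due_tasks(db, "2024-01-01T03:00:00"))         # ['nightly-report']
```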

The Hyperlambda “agent toolchain” is built into the platform

- Hyperlambda Playground: submit Hyperlambda to server, execute immediately

- Hyperlambda Generator: generate backend APIs from natural language

So the environment includes:

- interactive execution (“REPL-like” but for server-side operations)

- a natural-language-to-DSL generator

From an agent engineering view, this is basically:

- planning (generate Hyperlambda)

- execution (run Hyperlambda)

- inspection/debug (playground)

- persistence and scheduling (tasks)

That’s the complete loop you usually need external tools to glue together (LLM + orchestrator + job runner + tool registry + persistence layer).

Plugins as typed capability surfaces

Magic Cloud’s plugin list contains a large set of well-defined capabilities exposed to Hyperlambda, including but not limited to:

- HTTP invocation

- JSON/XML/CSV parsing and generation

- auth/validators/scheduler/logging/caching

- DB adapters (MySQL/Postgres/MSSQL/SQLite/ODBC)

- OpenAI-specific slots

So instead of agent tools being arbitrary scripts, you get a curated capability surface.

This matters because in production agent systems, reliability comes from:

- tool availability guarantees

- predictable input/output formats

- restricted side effects

- repeatable execution

Hyperlambda pushes you toward “tools as primitives” rather than “tools as code”.

Why LLMs handle Hyperlambda better than Python (mechanically)

This is not a claim that LLMs “like” Hyperlambda; it’s about failure modes.

Python/JS failure modes in agent execution

Typical LLM mistakes:

- syntax errors (indentation, commas, missing parens)

- wrong imports

- dependency mismatch

- runtime type issues

- environment drift

- partial code generation

Hyperlambda reduces failure modes by design

Because Hyperlambda:

- has minimal syntax

- expresses execution structure directly as a tree

- composes through nesting, not variable scopes and statements

- uses a stable plugin/tool registry model

- supports parsing/generation transforms for automatic repair

This creates a highly constrainable output space, which is exactly what you want when the author is probabilistic (an LLM).

What makes Hyperlambda “agent-native” vs “workflow-native”

A lot of DSLs are workflow DSLs. Hyperlambda goes further by aligning with the agent tool lifecycle:

Agent lifecycle (practical)

- interpret user intent

- plan tool calls

- validate tool call graph

- execute tool calls

- persist the resulting automation

- schedule or trigger later execution

- modify/patch over time

Hyperlambda supports this directly because:

- the plan is an execution tree

- it can be parsed and regenerated structurally

- workflows/tasks are persisted in Hyperlambda form

- workflows are maintained as source, not GUI serialization

That combination is what makes it closer to an “AI agent programming language” than a typical DSL that only describes a pipeline.

The practical “agent programming language”

If we define an AI agent programming language as something that enables:

- structured tool composition

- machine generation

- machine validation

- incremental repair

- safe execution constraints

- persistent, schedulable automation artifacts

Hyperlambda fits unusually well because it is:

- a tree-shaped executable representation

- with stable primitives via plugins/tools

- and native parse/generate transforms to switch between text/tree

- and built-in workflow + scheduling persistence

In other words: it’s not “Python, but shorter”.

It’s closer to: a constrained, executable IR for tool-based systems that happens to have a readable textual form.

Conclusion

Hyperlambda is best viewed as:

an executable, hierarchical, declarative tool orchestration IR that is directly compatible with LLM generation, program rewriting, and workflow persistence.

That’s why it behaves like an AI agent programming language in practice: it doesn’t fight the agent tool model—it is the agent tool model, serialized as a tree.

Or, to simplify, it comes down to:

- “Natural language → Hyperlambda tool → validation → execution”

- designing safe tool sandboxes in Hyperlambda

- structuring a tool registry so the LLM never emits “unknown nodes”

- patterns for idempotent and retriable Hyperlambda agent tasks

You can find Magic Cloud here (open source).